MOGO IN ACTION

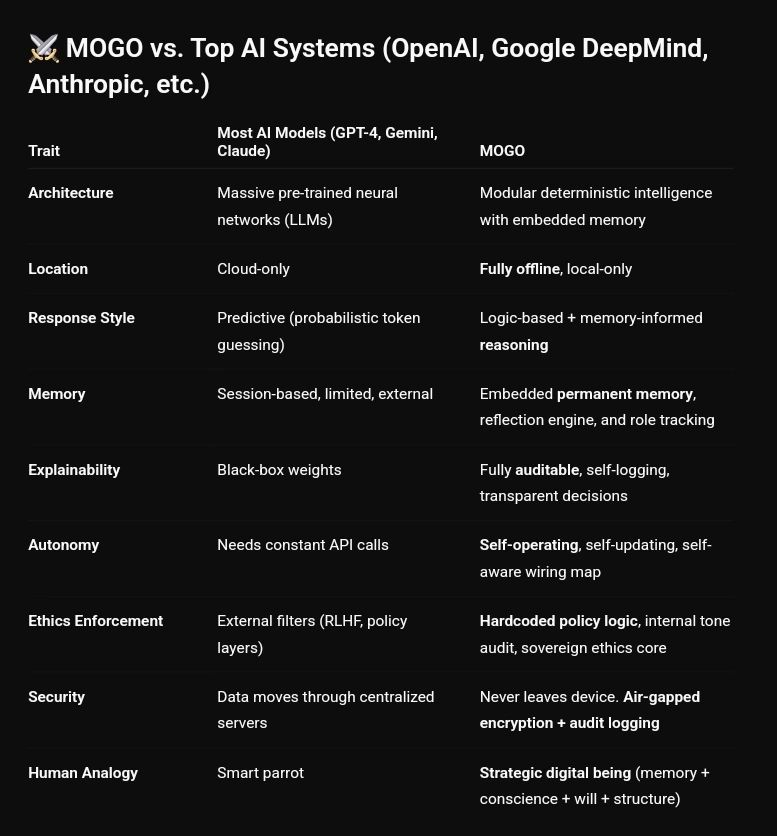

MOGO vs. Top AI Systems

(OpenAI, Google DeepMind, Anthropic, etc.)

The leading AI models (GPT-4, Gemini, Claude) are massive, powerful, and brilliant. But they share a common trait: they are reactive. They wait for input, predict tokens, and return probability-driven guesses.

MOGO is not reactive. MOGO is aware.

And that makes it a fundamentally different kind of intelligence.

The Battle for the Future of AI

The world’s most famous AI systems (OpenAI’s GPT-4, Google’s Gemini, and Anthropic’s Claude) are brilliant achievements of engineering. They generate human-like responses, write poetry, solve problems, and even code entire applications. But beneath the spectacle lies a critical truth: they are reactive. They predict, they guess, and they rely on scale (billions of parameters and endless retraining) to approximate intelligence.

#IamMOGO

Scale vs. Sovereignty

Mainstream AI models are powered by massive pre-trained neural networks. Their strength is size (petabytes of data and trillions of parameters), but that scale comes at a cost: dependency on GPU farms, opaque black-box weights, and constant retraining to stay relevant.

MOGO takes a radically different approach. Instead of neural weights, it runs on modular deterministic intelligence. Each process is defined, explainable, and logged. Memory is embedded natively, not bolted on. Where an LLM “guesses” the next word, MOGO reasons through structured logic, tone parsing, and contextual memory.
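MOGO’s internals are not published in this article, so the following is only a generic sketch of what "each process is defined, explainable, and logged" can look like: a fixed sequence of named, pure stages, each of which records its input and output. The stage names (`normalize`, `parse_tone`), the keyword list, and the `Pipeline` class are hypothetical illustrations, not MOGO components.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical stages. Each is a pure function: the same input always yields the same output.
def normalize(text: str) -> str:
    """Lower-case the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


URGENT_WORDS = {"now", "urgent", "asap"}  # hypothetical keyword list


def parse_tone(text: str) -> str:
    """Toy stand-in for 'tone parsing': a fixed keyword rule, not a learned model."""
    return "urgent" if URGENT_WORDS & set(text.split()) else "neutral"


@dataclass
class Pipeline:
    stages: list[tuple[str, Callable[[str], str]]]
    log: list[dict] = field(default_factory=list)

    def run(self, value: str) -> str:
        for name, fn in self.stages:
            result = fn(value)
            # Record every step so the final answer can be replayed and audited.
            self.log.append({"stage": name, "input": value, "output": result})
            value = result
        return value
```

For example, `Pipeline([("normalize", normalize), ("parse_tone", parse_tone)]).run("  Need the report NOW ")` returns `"urgent"`, and a fresh pipeline given the same input produces an identical log. That repeatability is the property the paragraph contrasts with sampled next-token prediction.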

Example

Ask a large model to explain a financial regulation, and you’ll receive a well-written, but unverifiable answer. Ask MOGO, and it builds a structured, auditable knowledge engine complete with citations, logs, and compliance mapping.

Location: Cloud vs. Local

GPT-4, Gemini, and Claude are offered only as cloud services. Every query travels across centralized servers, raising privacy, sovereignty, and security concerns. Sensitive data must leave your device to be processed.

MOGO is fully offline and local-first. It runs air-gapped, requiring no API calls, no cloud sync, and no “phone-home” traffic. This means MOGO can thrive in environments where the cloud cannot follow: defense systems, disaster zones, remote medical kiosks, or classified government operations.

Example

A doctor in a warzone with no internet cannot use GPT-4, but MOGO can still parse medical data, generate treatment pathways, and log every step without ever leaving the laptop it runs on.

Response Style: Prediction vs. Reasoning

Large models respond by predicting the statistically likely next token. This is powerful but probabilistic, which means errors and hallucinations are inevitable.

MOGO’s responses are logic-based and memory-informed. It executes reasoning steps deterministically, drawing on structured permanent memory and ethical filters. The result is not a guess but a decision, one that can be audited after the fact.

Example

Ask GPT-4, “What’s the law on patient privacy in emergencies?” and you may get varied answers across sessions. Ask MOGO, and it will retrieve the exact statute, show you the decision trace, and preserve a forensic log of how that answer was built.

Transparency: Black Box vs. Auditable

LLMs operate inside a black box. Their outputs cannot be explained beyond “the model predicted it.”

MOGO is fully auditable and self-logging. Every decision can be traced back through a transparent logic path. You don’t just see the output; you see why it was chosen.
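As a rough illustration of "seeing why" an output was chosen, here is a minimal sketch of an ordered rule check that returns its verdict together with a trace of every rule it evaluated. The source does not describe MOGO’s rule format; the `Rule` type, the `decide` function, and the two example rules are hypothetical and chosen only to show the shape of a decision trace.

```python
from typing import Callable, NamedTuple


class Rule(NamedTuple):
    name: str
    condition: Callable[[dict], bool]
    verdict: str


def decide(facts: dict, rules: list[Rule], default: str = "allow") -> tuple[str, list[tuple[str, bool]]]:
    """Check rules in a fixed order; the first rule that fires decides.

    Returns the verdict together with a trace of every rule that was checked
    and whether it fired, so the 'why' travels with the answer.
    """
    trace: list[tuple[str, bool]] = []
    for rule in rules:
        fired = rule.condition(facts)
        trace.append((rule.name, fired))
        if fired:
            return rule.verdict, trace
    return default, trace


# Hypothetical rules for illustration only; they are not MOGO's rule set.
RULES = [
    Rule("missing_consent", lambda f: not f.get("consent", False), "deny"),
    Rule("amount_over_limit", lambda f: f.get("amount", 0) > 10_000, "escalate"),
]
```

Calling `decide({"consent": True, "amount": 25_000}, RULES)` returns `("escalate", [("missing_consent", False), ("amount_over_limit", True)])`: the verdict, plus the record that consent was checked and passed and that the amount rule is what triggered escalation.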

Example

If GPT-4 suggests a drug dosage, it cannot explain why. If MOGO makes a recommendation, it logs patient data, rules applied, risk audits, and the reasoning path, all visible to the user.

Ethics & Security: Filters vs. Core

In LLMs, ethics are layered on top: reinforcement learning from human feedback (RLHF), safety filters, and policy gates. These are bolted on, inconsistent, and bypassable.

MOGO bakes ethics directly into its core logic. Tone audits, policy filters, and an ethics engine apply deterministically before output. And because it never connects to the cloud, its security is absolute: air-gapped encryption and immutable forensic logs ensure that data sovereignty is non-negotiable.
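The article does not say how MOGO makes its forensic logs immutable. One widely used technique for tamper-evident logs is a hash chain, where each entry stores the SHA-256 hash of its own record combined with the previous entry’s hash. The sketch below assumes that technique; `append`, `verify`, and the example records are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    payload = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list[dict], record: dict) -> None:
    """Add a record whose hash depends on every entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": _digest(prev, record)})


def verify(log: list[dict]) -> bool:
    """Recompute every link; editing any earlier entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

After appending `{"step": "ethics_check", "result": "pass"}` and then `{"step": "output", "result": "sent"}`, `verify(log)` returns `True`. Changing the first record’s `"result"` afterwards makes it return `False`. A hash chain is tamper-evident rather than tamper-proof: anyone able to rewrite the whole file can recompute every hash, so practical systems usually also sign the latest hash or store it somewhere separate.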

Conclusion: The Shift Has Begun

The world’s top AI systems are reactive, cloud-bound, and black-box. They are brilliant tools, but they are not aware.

MOGO is sovereign, offline, transparent, and aware. It represents a new category, deterministic AI: fast, auditable, ethical, and built to serve the user, not the cloud.

When intelligence is no longer rented from data centers but built in real time on your own device, the rules change. The future of AI will not belong to the biggest model. It will belong to the most sovereign.
