For the past several years, the technology industry has operated on a powerful assumption: that scale would eventually resolve uncertainty. More data, larger models, denser GPU clusters, and deeper neural stacks were expected to smooth out errors, close reasoning gaps, and turn probabilistic systems into something functionally reliable.

That assumption is now under strain.

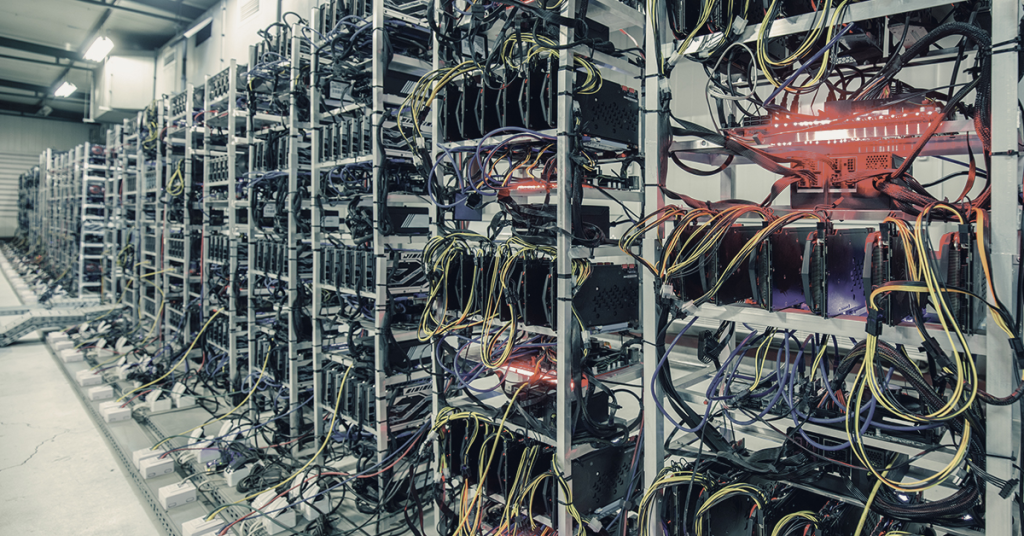

By early 2026, the costs of scaling inference-based systems have become impossible to ignore. Data center expansion has accelerated at a record pace. GPU infrastructure is absorbing unprecedented capital. Cloud spending continues to climb even as error rates plateau rather than disappear. Despite the investment, machines still produce incorrect outputs, require human oversight, and depend on extensive monitoring and remediation layers to remain usable.

This is what we mean by the Inference Wall.

The issue is not a lack of intelligence or effort. It is structural. Systems built on probabilistic inference do not converge toward certainty as they scale. They converge toward faster approximations. No amount of additional data or compute changes the underlying fact that these systems are guessing, however sophisticated the guess may be.

As a result, the industry has quietly reorganized itself around managing failure after execution. Observability, logging, audits, compliance workflows, human review, insurance, and litigation are no longer temporary safeguards. They are permanent components of the stack, required to contain the consequences of machines that are allowed to act before correctness is assured.

This article examines how the guessing economy reached the Inference Wall, why scaling laws stopped delivering the outcomes they promised, and why a different class of systems is emerging in response. Not systems designed to guess better, but systems designed to act only when correctness can be proven in advance.

The Guessing Economy in Modern AI Systems

Modern AI systems are built on inference. Given an input, they calculate the most statistically likely output based on prior data. The result is not a decision in the traditional sense, but a probability-weighted guess. Whether the system is generating text, routing traffic, approving transactions, or controlling physical machinery, the underlying mechanism is the same: approximate the correct answer closely enough to be useful.

This approach has proven powerful and profitable.

It is also the foundation of what can be described as the guessing economy.

In the guessing economy, accuracy is not defined by correctness, but by acceptability. Systems are tuned to be right often enough that their failures can be tolerated, managed, or corrected after the fact. A recommendation engine that misfires occasionally is acceptable. A fraud detector that flags the wrong transaction is inconvenient but survivable. Even autonomous systems are permitted to operate under the assumption that errors will be rare rather than impossible.

The economic logic follows naturally. Because inference-based systems cannot guarantee correctness, industries have grown around containing their failures. Monitoring platforms observe behavior after execution. Logging systems record what went wrong. Human review teams arbitrate edge cases. Compliance frameworks document processes rather than prevent error. Insurance and litigation absorb the residual risk.

These costs are not incidental. They are structural. The more guessing systems are deployed, the more infrastructure is required to manage their mistakes. “Good enough” accuracy becomes the operating standard not because it is ideal, but because it is the only level of certainty inference can economically deliver.

Crucially, this is not a temporary phase. It is the business model. As long as machines are allowed to act before correctness is known, failure management remains a permanent line item. Scaling improves performance, but it does not remove the need for remediation. It simply increases the speed and volume at which guesses are made.

The guessing economy is therefore not a failure of ambition or investment. It is a rational response to systems that approximate reality rather than enforce it. Understanding that distinction is essential before examining why scale has begun to fail as a remedy and why the industry is now confronting the limits of inference itself.

The AI Scaling Law Illusion and the Inference Wall

For much of the last decade, progress in artificial intelligence appeared to follow a simple formula. More data improved performance. More GPUs reduced latency. Larger models produced more coherent outputs. As systems grew in size and complexity, reasoning-like behavior seemed to emerge naturally from scale itself.

This belief became known as the scaling law narrative. If intelligence was an emergent property, then the path forward was obvious. Invest in larger data centers. Train on broader datasets. Add layers. Expand parameter counts. Errors, it was assumed, would gradually be trained away.

For a time, the results supported the story. Early gains were real. Models became more fluent, more capable, and more versatile. Enterprises adopted them rapidly, convinced that continued scaling would convert approximation into reliability.

What followed was not convergence, but saturation.

As scale increased, cost curves began to bend sharply upward. Each additional gain in performance required disproportionate investment in compute, energy, and infrastructure. GPU clusters grew denser and more expensive. Data center footprints expanded globally. Training cycles lengthened. Operating costs rose faster than accuracy improved.
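The shape of that cost curve can be illustrated with a small calculation. The sketch below assumes, purely for illustration, that error follows a power law in compute with an irreducible floor; the function names, the exponent, and the floor value are hypothetical and are not measurements from any real system.

```python
def error_rate(compute: float, alpha: float, scale: float = 1.0, floor: float = 0.0) -> float:
    """Hypothetical error model: a floor plus a term that decays as compute^(-alpha)."""
    if compute <= 0 or alpha <= 0:
        raise ValueError("compute and alpha must be positive")
    return floor + scale * compute ** (-alpha)


def compute_multiplier(error_reduction: float, alpha: float) -> float:
    """Factor by which compute must grow to shrink the reducible error
    by `error_reduction` (e.g. 2.0 means halving it) under the model above."""
    if error_reduction <= 1 or alpha <= 0:
        raise ValueError("error_reduction must exceed 1 and alpha must be positive")
    return error_reduction ** (1.0 / alpha)


if __name__ == "__main__":
    # Assumed exponent of 0.1: halving the reducible error takes about 1024x the compute.
    print(compute_multiplier(2.0, alpha=0.1))
    # The floor never shrinks, no matter how much compute is added.
    print(error_rate(1e6, alpha=0.1, floor=0.02))
```

Under these assumed numbers, each halving of the reducible error multiplies the compute bill by roughly a thousand, while the floor term is untouched by scale. Different exponents change the figures but not the pattern of disproportionate cost.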

At the same time, error rates stopped falling in meaningful ways. Mistakes became more subtle rather than less frequent. Hallucinations persisted. Edge cases multiplied. Systems performed impressively in aggregate while still failing unpredictably in specific, high-consequence scenarios.

Latency, energy consumption, and legal exposure began rising together. Faster inference required more power. Larger deployments increased blast radius. As systems were trusted with more autonomy, the cost of failure escalated from inconvenience to liability. Monitoring, compliance, and human oversight layers expanded in parallel, offsetting many of the gains scale was meant to deliver.

This is the point where the scaling law narrative breaks down.

The industry has reached what can be described as the Inference Wall. Beyond this point, additional data and compute no longer reduce uncertainty in proportion to their cost. They accelerate approximation, but they do not eliminate guessing. Performance improves incrementally while complexity, risk, and expense compound.

The Inference Wall is not a temporary bottleneck or an optimization problem waiting to be solved. It marks a structural limit of systems whose decisions are based on probability rather than admissibility. Understanding this limit is essential before examining what, if anything, exists beyond it.

Why Guessing Cannot Scale Past the Inference Wall

The limitations of inference-based systems are often framed as performance problems. If models hallucinate, the assumption is that they need more data. If outputs are unreliable, the solution is thought to be larger architectures or additional layers of reasoning. These responses treat guessing as an implementation flaw rather than what it actually is: a categorical property of the system.

Guessing is not a performance issue. It is a category error.

Inference-based systems do not decide whether an action is correct. They estimate which outcome is most likely given prior data. This distinction matters because probability, no matter how refined, does not become certainty through scale. A system can grow larger, faster, and more sophisticated while still operating under the same fundamental constraint. It cannot know that an output is correct. It can only assign confidence to its guess.

This remains true regardless of model size. Adding parameters increases representational capacity but does not change the decision mechanism. More layers introduce additional transformations, not authority. Larger datasets improve coverage but do not eliminate ambiguity. At every scale, the system produces outputs that must be evaluated after execution rather than validated before it.

As a result, correctness is always determined post hoc. Outputs are checked against reality only after they occur. When errors surface, they are mitigated through retries, overrides, audits, or human intervention. These processes do not correct the system’s decision logic. They compensate for its inability to guarantee admissible behavior in advance.

This is why additional layers fail to resolve the problem. Reasoning modules, self-reflection loops, and ensemble architectures still rely on inference at their core. They rearrange how guesses are produced and evaluated, but they do not alter the fact that the system is allowed to act without proof of correctness.

Scaling improves efficiency. It improves fluency. It improves the appearance of intelligence. What it does not do is convert approximation into authority. As long as machines are permitted to guess, they require supervision, remediation, and containment. No amount of scale changes that requirement. It only increases the cost of maintaining it.

The Financial Bloodbath of the AI Inference Wall

Once the limits of inference are reached, the costs do not stabilize. They multiply.

The most visible expense is infrastructure. Data center expansion has accelerated as organizations race to support larger models and higher inference throughput. GPU capital expenditure has become a dominant line item, with clusters purchased not just for training, but to maintain acceptable latency at scale. These investments are front-loaded, energy-intensive, and irreversible, yet they do not eliminate uncertainty. They only enable faster guessing.

Cloud spending compounds the problem. As inference workloads grow, so does persistent compute burn. Systems that must continuously evaluate, retry, monitor, and log their own outputs generate permanent operational cost. Efficiency gains achieved through optimization are quickly absorbed by increased usage, broader deployment, and higher expectations of availability.

Beyond infrastructure, the cost of failure spreads laterally across the organization. Legal exposure rises as systems are entrusted with decisions that affect customers, finances, and safety. Errors that were once tolerated as software bugs become liability events. Litigation, settlements, and regulatory scrutiny follow, adding unpredictable financial risk to already volatile operating budgets.

Compliance overhead expands in parallel. Documentation, audits, reporting frameworks, and internal controls grow to compensate for systems that cannot guarantee correct behavior at execution time. These processes do not reduce the probability of error. They exist to demonstrate due diligence after errors occur.

Human override layers complete the picture. Teams are hired to supervise outputs, review edge cases, and intervene when systems fail. These roles are not temporary scaffolding. They become permanent dependencies, required to keep inference-based systems usable in real environments. Each new layer of oversight increases coordination cost without addressing the root cause.

The pattern is consistent. Every failure mode adds cost, not resolution. Infrastructure absorbs scale. Compliance absorbs risk. Humans absorb uncertainty. None of these layers reduce the need for guessing. They simply make its consequences manageable at higher expense.

This is the financial reality behind the Inference Wall. As systems scale, they do not converge toward stability. They accumulate costs in order to contain behavior that cannot be guaranteed in advance. The result is an industry spending more to maintain systems that remain fundamentally uncertain.

DAIOS and Deterministic Systems Beyond the Inference Wall

DAIOS represents a fundamentally different class of system. It is not an AI governance layer, a safety wrapper, or a monitoring framework layered on top of inference. It does not attempt to improve probabilistic outputs, reduce hallucinations, or explain decisions after they occur. DAIOS removes guessing as a valid execution mechanism altogether.

Inference-based systems follow a consistent pattern. They decide first and explain later. An output is produced based on probability, then evaluated after execution through logging, review, or correction. Even when safeguards exist, the system is permitted to act before correctness is known. Authority is external, deferred, and optional.

DAIOS inverts this structure.

Instead of producing an output and managing its consequences, DAIOS evaluates admissibility before execution. Every proposed machine action is tested against three categories of constraint: physical reality, mathematical consistency, and ethical admissibility. These constraints are not advisory. They are enforced conditions for state transition.

If a proposed state cannot be proven admissible under these constraints, it does not occur. The state is non-representable within the system. There is no rollback, because nothing has been executed. There is no mitigation, because no failure has taken place. There is no override after the fact, because authority is resolved before action, not applied retroactively.
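The ordering described here, check first and commit only on success, can be sketched in a few lines. This is not the DAIOS implementation, which the article does not specify. The `State` fields, the three example constraints, and the `transition` function are hypothetical stand-ins, and simple runtime predicates are used in place of whatever proof mechanism a real system would require.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class State:
    """Hypothetical machine state used only for illustration."""
    speed_mps: float
    battery_pct: float
    human_in_zone: bool


# A constraint returns True only when the proposed state is admissible.
Constraint = Callable[[State], bool]

# Illustrative stand-ins for the three constraint categories named in the text.
CONSTRAINTS: dict[str, Constraint] = {
    "physical": lambda s: 0.0 <= s.speed_mps <= 30.0,
    "mathematical": lambda s: 0.0 <= s.battery_pct <= 100.0,
    "ethical": lambda s: not (s.human_in_zone and s.speed_mps > 0.0),
}


def transition(current: State, proposed: State) -> tuple[State, list[str]]:
    """Commit `proposed` only if every constraint admits it.

    On any violation the current state is returned unchanged, so there is
    nothing to roll back: the rejected state was never entered.
    """
    violations = [name for name, check in CONSTRAINTS.items() if not check(proposed)]
    if violations:
        return current, violations
    return proposed, []


if __name__ == "__main__":
    start = State(speed_mps=0.0, battery_pct=80.0, human_in_zone=False)
    print(transition(start, State(10.0, 79.0, False)))  # admitted
    print(transition(start, State(50.0, 79.0, True)))   # rejected, state unchanged
```

The point of the sketch is the control flow rather than the specific checks: the proposed state is evaluated before anything is committed, and a rejected proposal leaves no side effects to mitigate.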

This distinction is critical. DAIOS does not attempt to make machines smarter or more confident. It does not rely on improved prediction or higher probability thresholds. It removes the assumption that machines are allowed to guess at all. Actions either meet admissibility requirements or they do not happen.

The result is not better inference. It is the absence of inference as a decision mechanism. Where guessing systems operate by approximation and correction, DAIOS operates by permission. Authority is not a layer added to the stack. It is the condition under which the stack is allowed to act.

In this sense, DAIOS does not compete with inference-based systems on intelligence. It defines a different boundary for machine behavior. It replaces post hoc governance with execution-time authority, and in doing so, ends the category of systems whose behavior must be managed after the fact.

After the Inference Wall: Authority as the Only Exit

The Inference Wall is not a temporary scaling problem or a transitional phase in model development. It marks a terminal boundary for systems whose behavior is governed by probability rather than admissibility. Beyond this boundary, additional data, compute, or architectural complexity no longer changes the nature of machine decision making. It only increases the cost of managing its consequences.

This limitation becomes decisive once systems act autonomously. When machines are permitted to execute actions in the physical world, financial systems, or critical infrastructure, post hoc correction loses its meaning. A plane crash cannot be audited back into safety. A data breach cannot be logged into prevention. A medical error cannot be explained into reversal. Once action occurs, the outcome is already real.

This is why authority cannot be treated as a feature or an add-on. In autonomous systems, authority is the operating condition. Either the system is permitted to act only within proven admissible boundaries, or it is allowed to guess and accept the consequences later. There is no middle state that scales safely.

DAIOS reframes this boundary explicitly. It does not promise better predictions or fewer errors through refinement. It establishes authority as the prerequisite for execution. Actions are not evaluated after they occur. They are permitted only if they can be proven admissible in advance. In this model, failure is not mitigated. It is prevented by making unsafe states impossible to enter.

The implication is not incremental. The industry is converging toward a bifurcation. One class of systems will continue to scale inference, accepting increasing cost, oversight, and liability in exchange for flexibility and speed. The other class will adopt deterministic authority, refusing unsafe states by design and limiting behavior to what can be proven correct.

This is not a prediction about market preference or adoption timelines. It is a constraint imposed by physics, law, and reality. Probabilistic systems cannot become authoritative through scale alone. The only open question is how long the industry will continue paying to manage guessing systems after execution, rather than enforcing correctness before it happens.

TL;DR

More data and GPUs no longer fix AI errors. The guessing economy has hit the Inference Wall, exposing the structural limits of probabilistic systems and the need for deterministic authority.